Vulnerable U

Biggest Supply-Chain Attacks in History, Back to Back to Back ...

(Editor’s note: Sources for this report are at the end)

What the hell is going on in cybersecurity recently? I'm exhausted. I haven't been able to keep up. We've had the biggest supply chain hacks back to back to back to back, and they're not even all from the same threat actor.

We've got Claude Code's source code leaking. We've got Cisco's source code getting stolen. One of those was a mistake. The other was a hack by the prolific supply chain attacker who is running rampant right now. We have a package on npm with 100 million weekly downloads that started distributing malware. And every one of these supply chain attacks sets off a bunch of ripple effects.

Has Pandora's box opened in cybersecurity? How much is AI to blame for this? And how much of this is actually just the fact that we built a lot of our Internet security on top of a house of cards of underfunded open source software?

I'm not blaming open source software. Of course, open source software is awesome. I'm saying it's underfunded and under-supported. You've got critical pieces of infrastructure that just don't have the resources to do all the security work and stay on top of it the way they need to. And even when they do, this shit is really sneaky right now.

Attackers are getting in through some really sneaky paths. So let's look at a timeline:

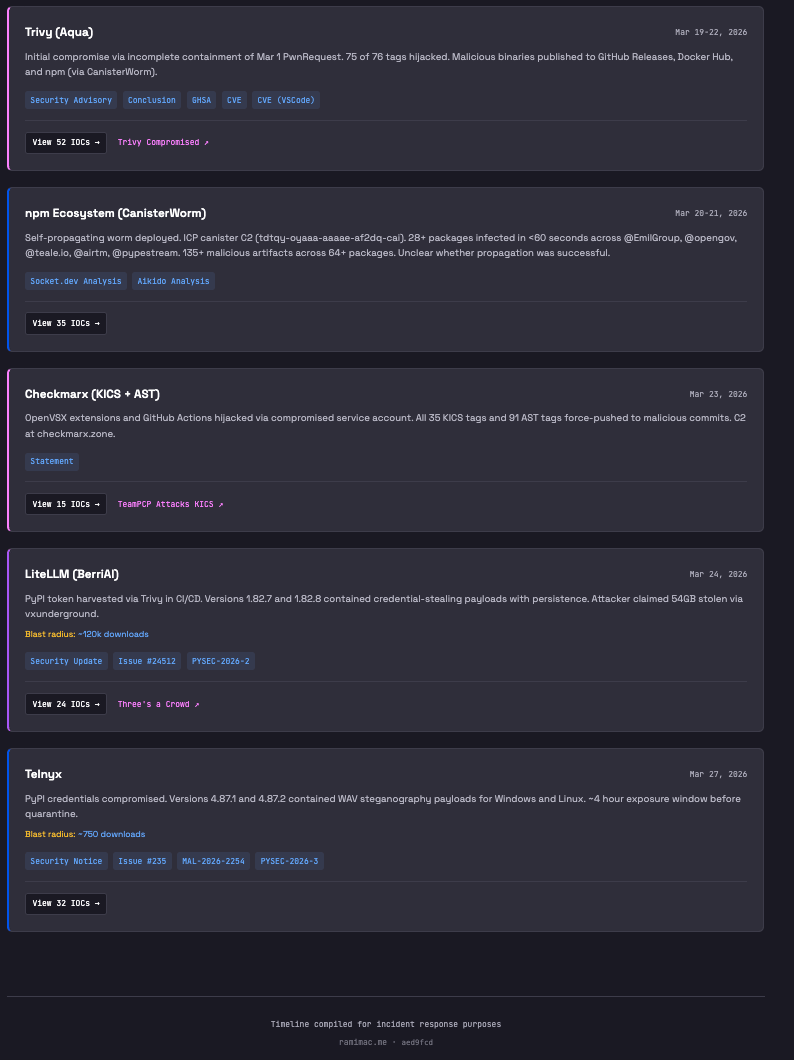

Timeline by Rami McCarthy, Principal Security Researcher at Wiz

TeamPCP vs. Trivy

One of the groups at the center of this mess is TeamPCP. Researchers have tied them to a string of supply chain attacks that unfolded across late February and March 2026, with Trivy becoming one of the earliest and most important compromises in the chain.

Trivy matters because it is one of the most widely used open source security scanners in cloud-native environments, and a lot of teams run it automatically inside CI/CD to scan containers, repos, and infrastructure before code ships.

The March 19 incident was not just “Trivy got hacked through GitHub” in a generic sense. Aqua Security, which maintains Trivy, said the attack grew out of an earlier late-February breach, where attackers exploited a misconfiguration in Trivy’s GitHub Actions environment and extracted a privileged token. Aqua disclosed that first incident on March 1 and rotated credentials, but later said the cleanup was incomplete, which let the attackers keep enough access to come back and weaponize Trivy’s release and automation pipeline.

That is what made the next step so dangerous. On March 19, the attackers used compromised credentials to publish a malicious Trivy v0.69.4 release, replace all 7 tags in setup-trivy, and force-push 76 of 77 version tags in trivy-action to malicious commits. That meant organizations referencing those trusted tags in GitHub Actions could pull attacker-controlled code straight into privileged CI/CD runs. Aqua’s advisory says the malicious code stole secrets from runners before the legitimate scan executed.
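Part of why the tag rewrite worked is that a Git tag is just a movable pointer: a workflow line like `uses: aquasecurity/trivy-action@0.35.0` runs whatever commit that tag points to at run time. Pinning to a full 40-character commit SHA closes that particular gap. Here's a minimal Python sketch, assuming your workflows live in `.github/workflows/` and use single-line `uses:` entries, that flags references not pinned to a full SHA. It's a regex heuristic rather than a real YAML parser, and the function names are made up for illustration. Pinning narrows the attack surface but doesn't close it: a SHA-pinned action can still download or call unpinned code when it runs.

```python
import re
from pathlib import Path

# Matches "uses: owner/repo@ref" or "- uses: owner/repo/path@ref" and captures the target.
USES_RE = re.compile(r"^\s*(?:-\s*)?uses:\s*['\"]?([^'\"\s#]+)")
# A full commit SHA: 40 lowercase hex characters.
FULL_SHA_RE = re.compile(r"^[0-9a-f]{40}$")


def unpinned_refs(workflow_text: str) -> list[str]:
    """Return `uses:` targets whose ref is not a full 40-character commit SHA."""
    findings = []
    for line in workflow_text.splitlines():
        match = USES_RE.match(line)
        if not match:
            continue
        target = match.group(1)
        # Local actions and container images have no Git ref to pin, so skip them.
        if target.startswith("./") or target.startswith("docker://"):
            continue
        ref = target.rpartition("@")[2]  # the whole target if there is no "@" at all
        if not FULL_SHA_RE.match(ref):
            findings.append(target)
    return findings


def scan_workflows(root: str = ".") -> dict[str, list[str]]:
    """Scan .github/workflows/*.yml and *.yaml under `root` (hypothetical helper)."""
    results = {}
    workflow_dir = Path(root, ".github", "workflows")
    for path in sorted(workflow_dir.glob("*.y*ml")):
        hits = unpinned_refs(path.read_text(encoding="utf-8"))
        if hits:
            results[str(path)] = hits
    return results


if __name__ == "__main__":
    for path, hits in scan_workflows().items():
        for target in hits:
            print(f"{path}: not SHA-pinned: {target}")
```

Run from a repo root, it prints one line per tag- or branch-pinned action, which is the list of places where a force-pushed tag would flow straight into your CI.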

That is the real story here: security tooling that normally runs with broad access got turned into a credential harvester. The malicious payload targeted GitHub Actions runners and was designed to dump process memory, harvest SSH keys, and exfiltrate cloud credentials and Kubernetes tokens. So this was a compromise of a trusted scanner embedded deep in automated build and release workflows.

There was also a separate Trivy-related incident earlier in the same broader timeline: a malicious Trivy VS Code extension release distributed through OpenVSX. Aqua’s advisory for that issue says version 1.8.12 contained malicious code meant to abuse local AI coding agents and exfiltrate sensitive information. So it is fair to say Trivy was hit more than once in this overall campaign, but it is more accurate to describe these as distinct incidents affecting different parts of the Trivy ecosystem rather than one single compromise that happened “twice” in exactly the same way.

The Jumping-Off Point

Only one trivy-action tag stayed clean: 0.35.0. That wasn't because the attackers used it as a launch point. Aqua says it survived because GitHub immutable releases had already been enabled for that tag before the attack. Everything else went bad fast: 76 of the 77 trivy-action tags were force-pushed, all 7 setup-trivy tags were replaced, and a malicious v0.69.4 Trivy release went out.
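Immutable releases are what saved 0.35.0. For repos where you can't count on that, one low-tech way to notice a force-pushed tag is to record which commit each tag pointed to when you vetted it and compare that against what the remote says now. Here's a sketch in Python, assuming `git` is on the PATH; the snapshot you compare against (`expected`) is something you'd maintain yourself, and all function names are hypothetical.

```python
import subprocess
from typing import Optional


def parse_ls_remote(output: str) -> dict[str, str]:
    """Map tag name -> commit SHA from `git ls-remote --tags` output.

    Annotated tags show up twice: once as the tag object and once with a "^{}"
    suffix holding the commit it points to. The peeled "^{}" entry wins.
    """
    tags: dict[str, str] = {}
    peeled: dict[str, str] = {}
    for line in output.splitlines():
        sha, _, ref = line.partition("\t")
        if not ref.startswith("refs/tags/"):
            continue
        name = ref[len("refs/tags/"):]
        if name.endswith("^{}"):
            peeled[name[:-3]] = sha
        else:
            tags[name] = sha
    tags.update(peeled)
    return tags


def remote_tags(repo_url: str) -> dict[str, str]:
    """Ask the remote which commit each tag points to right now."""
    result = subprocess.run(
        ["git", "ls-remote", "--tags", repo_url],
        capture_output=True, text=True, check=True,
    )
    return parse_ls_remote(result.stdout)


def find_drift(
    expected: dict[str, str], observed: dict[str, str]
) -> dict[str, tuple[str, Optional[str]]]:
    """Return tags whose commit no longer matches the recorded snapshot (None = tag gone)."""
    return {
        tag: (sha, observed.get(tag))
        for tag, sha in expected.items()
        if observed.get(tag) != sha
    }
```

Something like `find_drift(saved_snapshot, remote_tags("https://github.com/aquasecurity/trivy-action"))` returning a non-empty dict means a tag moved since you last looked. A moved tag isn't proof of compromise (some projects retag on purpose), but it's worth a human look before the next CI run.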

That was the real jumping-off point. Once TeamPCP turned Trivy’s release pipeline into a credential harvester, they were no longer just compromising one tool. They were harvesting secrets from the CI/CD environments running it. Aqua says the malware executed before the legitimate scan and pulled secrets from runner memory and the environment.

Less than 24 hours later, the attack jumped into npm. Aikido detected what it named CanisterWorm on March 20 at 20:45 UTC, and Mend says stolen npm tokens from the Trivy stage became the launchpad. From there, the worm used those tokens to figure out which maintainer account it had landed on, enumerate every package that account could publish, bump versions, and push poisoned releases across whole scopes.
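From the defender's side, the worm's behavior (one account suddenly publishing new versions of many packages in a short span) is the kind of pattern you can alert on. Here's a rough Python sketch of that heuristic. It assumes you already collect publish events for the packages you care about from somewhere, such as registry metadata or your own audit logs; the `PublishEvent` shape, the window, and the threshold are illustrative assumptions, not anything Aikido or Mend published.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class PublishEvent:
    """One package release, however you reconstructed it (hypothetical shape)."""
    package: str
    version: str
    publisher: str
    time: datetime


def burst_publishers(events: list[PublishEvent], window: timedelta, threshold: int) -> dict[str, int]:
    """Return {publisher: peak count} for publishers who released at least `threshold`
    distinct packages within any sliding time window of length `window`."""
    by_publisher: dict[str, list[PublishEvent]] = {}
    for event in events:
        by_publisher.setdefault(event.publisher, []).append(event)

    flagged = {}
    for publisher, evs in by_publisher.items():
        evs.sort(key=lambda e: e.time)
        peak, start = 0, 0
        for end in range(len(evs)):
            # Advance the left edge until the window spans no more than `window`.
            while evs[end].time - evs[start].time > window:
                start += 1
            distinct = len({e.package for e in evs[start:end + 1]})
            peak = max(peak, distinct)
        if peak >= threshold:
            flagged[publisher] = peak
    return flagged
```

Plenty of maintainers legitimately batch-release a monorepo, so a hit here is a prompt to go look, not a verdict.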

Around the same time, TeamPCP was also tied to other escalation activity, including repo tampering inside Aqua’s GitHub org and destructive operations against exposed infrastructure configured for Iran. Those belong to the broader campaign, but they are separate from the npm worm itself.

And the cleanup took longer than one day. Aqua says the first malicious artifacts were removed on March 19–20, but a second Docker Hub wave stayed live until early March 23 UTC. So we’re looking at multiple windows of compromise across several days.

Ripple Effects Reach Checkmarx, LiteLLM

Then the ripple effects start. One of the first downstream hits landed at Checkmarx — more specifically, in the checkmarx/kics-github-action and checkmarx/ast-github-action workflows, along with two OpenVSX plugins. That matters because these kinds of security tools often run inside CI/CD with broad access to build runners, repo tokens, and cloud credentials. So the danger here wasn’t just that “another scanner got hit.” It was that poisoned security tooling was now running inside trusted pipelines with privileged access.

Then came what may be the most consequential second-order effect of the Trivy compromise: LiteLLM on PyPI. This is where the campaign jumps out of npm and into Python’s package ecosystem. TeamPCP published malicious versions 1.82.7 and 1.82.8 of the real LiteLLM package, and PyPI later quarantined them. LiteLLM is widely used as a single interface for multiple model providers, so it often sits close to valuable environment variables and service credentials.
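If you run Python services that might have pulled LiteLLM during that window, a quick first check is whether one of those two versions is installed right now. Here's a minimal sketch using the standard library's `importlib.metadata`; the helper names are hypothetical, and a clean result only tells you about the current environment, not whether a bad version was installed at some point and later replaced.

```python
from importlib import metadata
from typing import Optional

# The two LiteLLM versions named as malicious in this incident.
KNOWN_BAD = {"litellm": {"1.82.7", "1.82.8"}}


def is_known_bad(package: str, version: str) -> bool:
    return version in KNOWN_BAD.get(package.lower(), set())


def installed_version(package: str) -> Optional[str]:
    """Version of `package` in the current environment, or None if it isn't installed."""
    try:
        return metadata.version(package)
    except metadata.PackageNotFoundError:
        return None


def check_environment() -> list[str]:
    findings = []
    for package in KNOWN_BAD:
        version = installed_version(package)
        if version is not None and is_known_bad(package, version):
            findings.append(f"{package}=={version}")
    return findings


if __name__ == "__main__":
    hits = check_environment()
    print("known-bad installs:", ", ".join(hits) if hits else "none found")
```

Run it with the same interpreter (and virtualenv) your service uses, since each environment has its own installed packages.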

The malicious LiteLLM packages were built to harvest secrets from whatever host they landed on: environment variables, SSH keys, cloud credentials, Kubernetes tokens, and database passwords. So the point isn’t that LiteLLM was literally routing all of those secrets through itself. It’s that compromising a package like LiteLLM gives attackers a shot at the systems and pipelines where those secrets already live. LiteLLM also said its official Proxy Docker image was not affected.
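That list doubles as a starting checklist for what to rotate. As a sketch, assuming a POSIX-style home directory and common default file locations, something like this lists the names of credential-looking environment variables and which usual credential files exist on a host, without ever printing a secret value. The name patterns and file list are my own assumptions and deliberately incomplete.

```python
import os
import re
from pathlib import Path

# Heuristic name fragments that usually indicate a credential (assumption, not exhaustive).
SECRET_NAME_RE = re.compile(
    r"TOKEN|SECRET|PASSWORD|PASSWD|API_?KEY|ACCESS_KEY|PRIVATE_KEY|CREDENTIAL",
    re.IGNORECASE,
)

# Common default locations for SSH keys and cloud, cluster, and registry credentials.
CREDENTIAL_FILES = [
    "~/.ssh",
    "~/.aws/credentials",
    "~/.config/gcloud",
    "~/.azure",
    "~/.kube/config",
    "~/.docker/config.json",
    "~/.npmrc",
    "~/.pypirc",
]


def secret_like_names(env: dict[str, str]) -> list[str]:
    """Sorted names (never values) of variables that look like credentials."""
    return sorted(name for name in env if SECRET_NAME_RE.search(name))


def present_credential_files(candidates: list[str] = CREDENTIAL_FILES) -> list[str]:
    """Which of the candidate paths exist for the current user."""
    return [p for p in candidates if Path(p).expanduser().exists()]


if __name__ == "__main__":
    print("env vars to rotate:", secret_like_names(dict(os.environ)))
    print("credential files present:", present_credential_files())
```

Anything it reports on a host that ran a bad version is a candidate for rotation, not just review.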

From there, the campaign appears to shift from collection to monetization. Unit 42 reported that TeamPCP announced a partnership with the Vect ransomware group on BreachForums, suggesting an effort to turn stolen data and access into extortion. I’d word that carefully, though: that’s a threat-actor claim reported by researchers, not public proof that every victim was hit by Vect ransomware.

Second-Order Impacts: Cisco, Claude Code, and Mercor

By the end of March, Cisco was being reported as one of the downstream victims of the Trivy compromise. BleepingComputer reported that attackers used credentials stolen through the malicious Trivy GitHub Action to access Cisco’s build and development environment, steal source code, and abuse AWS keys from a small number of accounts. That is the real second-order risk here: once a poisoned security tool lands inside a privileged pipeline, the blast radius moves from the tool itself to every environment that trusts it.

Around the same time, Claude Code source code leaked, and a lot of people immediately wondered whether it was connected to Trivy or LiteLLM. Publicly, it does not look like it was. Anthropic said the release accidentally included internal source code because of a packaging mistake caused by human error, not a security breach, and that no customer data or credentials were exposed.

Mercor looks like a more credible downstream impact from the LiteLLM side of the chain. Mercor told TechCrunch it was “one of thousands of companies” affected by the LiteLLM compromise linked to TeamPCP. Separately, Lapsus$ claimed it stole roughly 4TB of Mercor data, including source code, database records, video interviews, and identity documents. That alleged scope is serious, but it should still be framed as an attacker claim unless Mercor independently confirms the totals.

The Axe Falls on Axios

Then the story gets even messier, because Axios, which has 100 million weekly downloads on npm, was hit too. But this appears to be a separate incident, not another branch of the TeamPCP/Trivy campaign. Google said the Axios compromise was carried out by the North Korea-linked actor it tracks as UNC1069, while Microsoft attributed the infrastructure and activity to Sapphire Sleet, another North Korea-linked tracking label. Google also said this Axios attack was not connected to the major npm supply chain attack from the previous week.

The important nuance is that Axios itself was not universally “pushing malware everywhere.” Only two specific npm releases were malicious, one on the 1.x line and one on the 0.x line. In both cases, the attackers inserted a fake dependency that used a postinstall hook to quietly fetch and deploy a cross-platform RAT on Windows, macOS, and Linux. Google and Microsoft both say defenders should treat affected hosts as compromised, rotate secrets, and roll back to safe versions such as 1.14.0 or 0.30.3.
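The postinstall hook is the key mechanism: npm runs a package's `preinstall`, `install`, and `postinstall` scripts automatically during installation, so a dependency you never import can still execute code on your machine. Installing with `--ignore-scripts` (or setting `ignore-scripts=true` in `.npmrc`) blocks that, at the cost of breaking packages that legitimately need a build step. To see which installed packages declare such hooks at all, here's a small Python sketch that walks a `node_modules` tree. The function name is hypothetical, and because it only reads `package.json` it won't catch everything; for example, npm can also run an implicit node-gyp build for packages that ship a `binding.gyp` without declaring an install script.

```python
import json
from pathlib import Path

# Lifecycle scripts npm runs automatically when a package is installed.
INSTALL_HOOKS = ("preinstall", "install", "postinstall")


def packages_with_install_hooks(node_modules: str) -> dict[str, dict[str, str]]:
    """Map each package.json under `node_modules` to the install-time scripts it declares."""
    found = {}
    for manifest in sorted(Path(node_modules).rglob("package.json")):
        try:
            data = json.loads(manifest.read_text(encoding="utf-8"))
        except (OSError, ValueError):  # unreadable, badly encoded, or not valid JSON
            continue
        if not isinstance(data, dict):
            continue
        scripts = data.get("scripts")
        if not isinstance(scripts, dict):
            continue
        hooks = {name: cmd for name, cmd in scripts.items() if name in INSTALL_HOOKS}
        if hooks:
            found[str(manifest)] = hooks
    return found


if __name__ == "__main__":
    for path, hooks in packages_with_install_hooks("node_modules").items():
        print(path, hooks)
```

Most projects will only have a handful of hits, which makes an unfamiliar package with a `postinstall` script easy to spot.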

Because Axios sits so deep in the JavaScript ecosystem, the risk is not limited to teams that knowingly installed it. Google warned that the ripple effects could spread through other packages and downstream environments, and Microsoft noted that projects auto-updating Axios could have silently pulled the malicious releases during the exposure window.
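Auto-updating usually comes down to semver ranges. By default, `npm install axios` saves a caret range such as `^1.14.0` into `package.json`, and whenever that range gets resolved fresh (no lockfile, a regenerated lockfile, or an `npm update`), any newer compatible release can come in. This simplified Python sketch of caret semantics (plain `MAJOR.MINOR.PATCH` only, no prerelease tags) shows how wide those ranges are; it's an illustration, not npm's actual resolver.

```python
def parse_version(text: str) -> tuple[int, int, int]:
    """Parse a plain MAJOR.MINOR.PATCH string into a comparable tuple of ints."""
    major, minor, patch = (int(part) for part in text.split("."))
    return (major, minor, patch)


def caret_allows(range_spec: str, version: str) -> bool:
    """Simplified npm caret range check (no prerelease tags, no partial versions).

    ^1.2.3 -> >=1.2.3 <2.0.0
    ^0.2.3 -> >=0.2.3 <0.3.0
    ^0.0.3 -> >=0.0.3 <0.0.4
    """
    if not range_spec.startswith("^"):
        raise ValueError("expected a caret range like ^1.2.3")
    low = parse_version(range_spec[1:])
    candidate = parse_version(version)
    if low[0] > 0:
        high = (low[0] + 1, 0, 0)  # 1.x and up: minor and patch bumps allowed
    elif low[1] > 0:
        high = (0, low[1] + 1, 0)  # 0.x: only patch bumps allowed
    else:
        high = (0, 0, low[2] + 1)  # 0.0.x: only that exact patch
    return low <= candidate < high


for spec, ver in [("^1.14.0", "1.15.2"), ("^1.14.0", "2.0.0"), ("^0.30.3", "0.30.9"), ("^0.30.3", "0.31.0")]:
    print(spec, ver, caret_allows(spec, ver))
```

The first and third checks pass while the second and fourth don't, which is the point: a caret range on a 1.x package accepts every later 1.x release. That's why pinning an exact version and installing from a committed lockfile with `npm ci` matters during an incident, since `npm ci` installs exactly what the lockfile records instead of re-resolving ranges.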

We’re not going to know the full impact of this one for a while. North Korea is not the same as a cybercrime group like TeamPCP: they can quietly sit on the secrets they’ve sucked up and figure out how best to use them. Most likely they’ll go after large-scale crypto theft, since that’s generally their main goal.

A World of Hurt

The bigger issue now is cleanup. Across the Trivy, LiteLLM, and Axios incidents, defenders are being told to audit dependency trees, isolate affected hosts, pin known-good versions, clear caches, and rotate any secrets present on compromised systems. Google warned that hundreds of thousands of stolen secrets could already be circulating as a result of these attacks.
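For the “audit dependency trees” step, the direct dependencies in `package.json` are the easy part; the risky copies are often transitive. Here's a minimal Python sketch that walks the `packages` map in an npm v2/v3 `package-lock.json` and flags any installed copy that matches a deny-list. The deny-list contents are yours to fill in from the advisories you track (the name in the example is a placeholder), and older v1 lockfiles, which nest a `dependencies` tree instead, aren't handled.

```python
import json
from pathlib import Path


def audit_lockfile(lock_path: str, denylist: dict[str, set[str]]) -> list[str]:
    """Flag every copy (direct or transitive) of a deny-listed name@version recorded in
    the top-level "packages" map of an npm v2/v3 package-lock.json."""
    data = json.loads(Path(lock_path).read_text(encoding="utf-8"))
    hits = []
    for key, meta in data.get("packages", {}).items():
        if not key:  # the "" entry is the root project itself
            continue
        # Keys look like "node_modules/a" or "node_modules/a/node_modules/@scope/b";
        # the package name is whatever follows the last "node_modules/". If an entry
        # carries its own "name" (npm records one for aliased installs), prefer that.
        name = meta.get("name") or key.rsplit("node_modules/", 1)[-1]
        version = meta.get("version")
        if version in denylist.get(name, set()):
            hits.append(f"{key} ({name}@{version})")
    return hits


if __name__ == "__main__":
    # Placeholder deny-list: fill in real names and versions from advisories.
    DENYLIST = {"example-compromised-pkg": {"9.9.9"}}
    lock = Path("package-lock.json")
    if lock.exists():
        for hit in audit_lockfile(str(lock), DENYLIST):
            print("deny-listed:", hit)
```

The lockfile key tells you which dependency dragged the bad copy in, which is what you need to know before pinning or overriding it.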

So the practical takeaway is less “everything is doomed” and more “the follow-on damage may keep unfolding for a while.” That is what makes these supply chain attacks so nasty: by the time the malicious package is pulled, the real problem may already be the credentials, tokens, and access that were stolen before anyone realized they were exposed.