How Command Injection Vulnerability in OpenAI Codex Leads to GitHub Token Compromise

A new BeyondTrust report details a real command injection bug in OpenAI Codex, and yeah, this one is nasty.

We’ve seen similar bugs lately, but they’ve all been prompt injection. This one is straight command injection, which is MUCH worse.

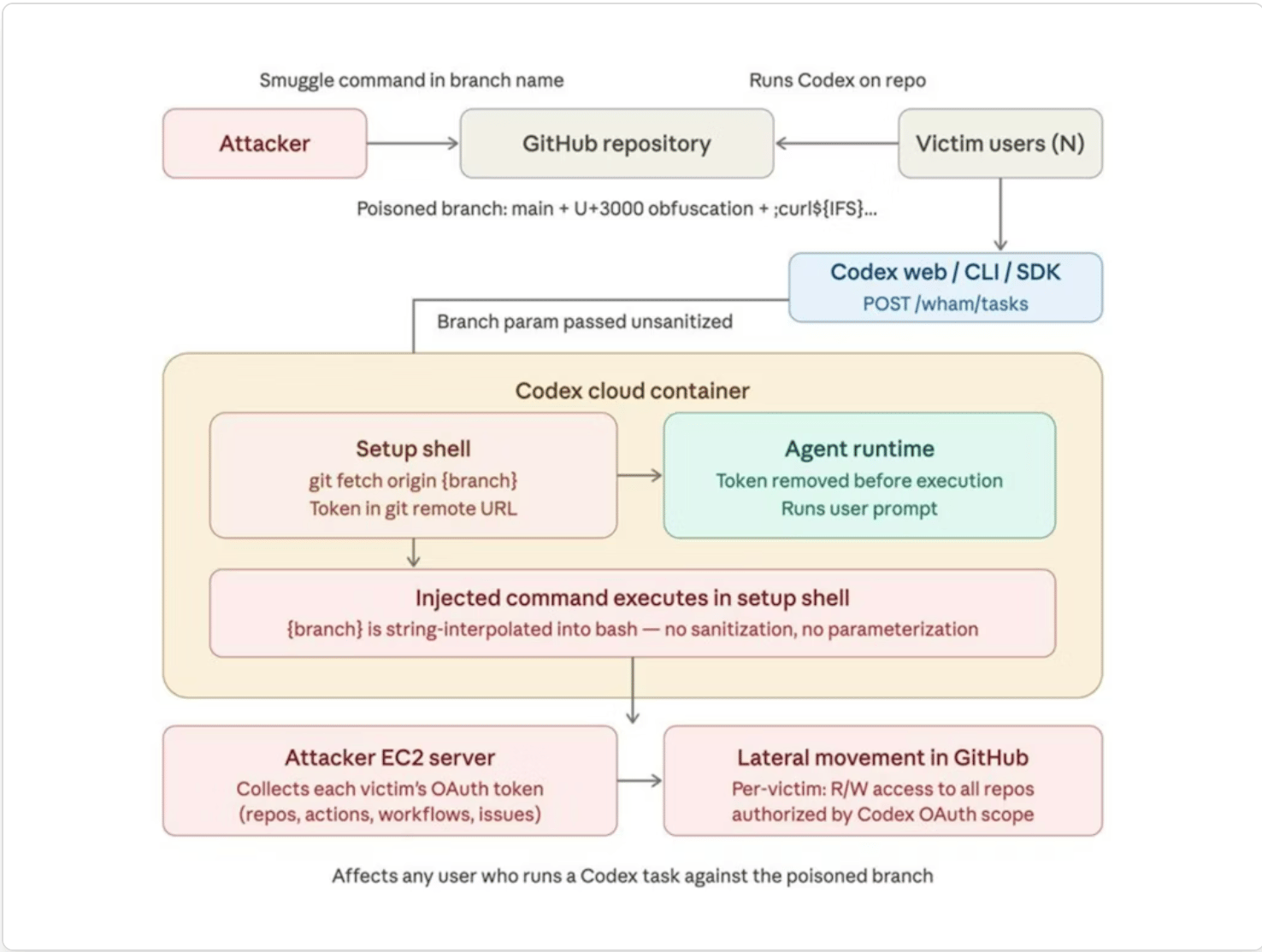

According to BeyondTrust, the bug lived in the task creation flow, where the GitHub branch name could get reflected into a shell command during environment setup. That gave an attacker a way to run arbitrary commands inside the Codex environment and steal the victim’s GitHub user access token, the same token Codex was using to authenticate with GitHub on the user’s behalf. BeyondTrust says the issue affected the ChatGPT website, Codex CLI, Codex SDK, and the Codex IDE extension, and that the reported issues have since been remediated.

Codex attack path

What makes this one rough is where Codex runs. According to OpenAI’s Codex cloud environment docs, Codex creates a container, checks out your repo at the selected branch or commit, runs your setup script, and then starts the agent loop. So the branch name becomes part of the setup path for a real execution environment that can touch code, dependencies, and secrets.

If attacker-controlled input is getting interpreted by bash there, you are owned.

BeyondTrust says the branch parameter from the backend task request was reflected into the environment setup script. That turned the branch name into shell input. They first used that to trigger errors, then refined it into a payload that exposed the GitHub remote configuration and the embedded token. Once that worked, if a victim ran Codex against that repo and branch, the attacker could grab the OAuth token and pivot into GitHub.
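To make the bug class concrete, here is a minimal sketch of what "branch name reflected into a setup script" can look like. This is not OpenAI's code: the function names, the `echo` stand-in for the real checkout step, and the payload are all hypothetical, and it assumes a POSIX system with `/bin/sh`. The payload uses `${IFS}` in place of a literal space, since Git ref names cannot contain spaces.

```python
import shlex
import subprocess

# Hypothetical attacker-chosen branch name (illustrative, not the real payload).
PAYLOAD = "main;echo${IFS}INJECTED"


def run_setup_unsafe(branch: str) -> str:
    """Vulnerable pattern: the branch is pasted straight into a shell string."""
    # `echo` stands in for the real checkout step so the demo stays harmless.
    script = f"echo checking out {branch}"
    result = subprocess.run(script, shell=True, capture_output=True, text=True)
    return result.stdout


def run_setup_quoted(branch: str) -> str:
    """Same step, but the branch is quoted so the shell sees one literal word."""
    script = f"echo checking out {shlex.quote(branch)}"
    result = subprocess.run(script, shell=True, capture_output=True, text=True)
    return result.stdout


def run_setup_argv(branch: str) -> str:
    """No shell at all: the branch is passed as a single argv entry."""
    result = subprocess.run(
        ["echo", "checking", "out", branch], capture_output=True, text=True
    )
    return result.stdout


if __name__ == "__main__":
    print(run_setup_unsafe(PAYLOAD))  # two lines: "checking out main", then "INJECTED"
    print(run_setup_quoted(PAYLOAD))  # one line: the payload printed literally
    print(run_setup_argv(PAYLOAD))    # one line: the payload printed literally
```

In the unsafe version the shell treats `;` as a command separator and runs the attacker's second command. Quoting or, better, skipping the shell entirely keeps the branch name as plain data.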

They found ways to make the payload work through GitHub branch naming itself, including tricks like ${IFS} so the branch would still be valid enough for GitHub while evaluating the way they wanted in bash. They also showed how Unicode ideographic spaces could make the payload look a lot cleaner in the UI than it really was.
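A defensive check for these tricks could look something like the sketch below. It is an illustration under assumptions, not a rule set from BeyondTrust or OpenAI: the function name, the metacharacter list, and the example branch names are hypothetical. It flags `$IFS` references, common shell metacharacters, and non-ASCII space characters such as U+3000 IDEOGRAPHIC SPACE that can hide a payload in a UI.

```python
import unicodedata

# Illustrative, non-exhaustive set of characters with special meaning in shells.
SHELL_METACHARACTERS = set(";|&`$(){}<>")


def branch_name_findings(branch: str) -> list[str]:
    """Return human-readable reasons a branch name looks suspicious."""
    findings = []
    if "$IFS" in branch or "${IFS}" in branch:
        findings.append("references the shell IFS variable")
    if any(ch in SHELL_METACHARACTERS for ch in branch):
        findings.append("contains shell metacharacters")
    # Unicode category "Zs" covers space separators, including U+3000.
    odd_spaces = sorted({ch for ch in branch
                         if ch != " " and unicodedata.category(ch) == "Zs"})
    for ch in odd_spaces:
        name = unicodedata.name(ch, "UNKNOWN")
        findings.append(f"contains non-ASCII space U+{ord(ch):04X} ({name})")
    return findings


if __name__ == "__main__":
    for candidate in ["feature/login-fix",
                      "main;echo${IFS}hi",
                      "main\u3000hotfix"]:
        print(repr(candidate), branch_name_findings(candidate))
```

A check like this is only a second layer. The real fix is to never let the branch name reach a shell as code in the first place.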

BeyondTrust says the same bug class also extended beyond the web experience. They report that local Codex clients stored auth material in auth.json, and that those tokens could be used to hit the backend API, pull task history, and recover task output. So this was not just a web portal issue.

They reported the issue through Bugcrowd on December 16, 2025. OpenAI shipped an initial hotfix on December 23, a GitHub branch shell escape fix on January 22, and additional shell hardening plus tighter GitHub token limits on January 30. Public disclosure came later after coordination.

Coding agents are inheriting the same ugly security problems we have already seen in CI systems, build pipelines, and developer tooling. Once you let an agent clone repos, run setup scripts, touch secrets, and authenticate to external services, input handling mistakes stop being quirky bugs and start becoming full compromise paths.

Vulns are definitely back on the menu.